Smart glasses penetrate school systems

**Disclaimer:** A student chose to remain anonymous due to privacy concerns regarding his actions.

On November 9th, 2025, Yonsei University held an online midterm for “Natural Language Processing and ChatGPT,” a course with about 600 enrolled students. Half of them confessed to cheating. Despite rules requiring recordings of their screens, hands, and faces, students manipulated camera positions to access instant AI-generated answers.

Across South Korea, universities are facing widespread AI-assisted academic misconduct. At Korea University, a general education course with roughly 1,400 students had its midterm invalidated after all test-takers shared screenshots of exam questions in group chats and submitted them to large language models (LLMs) such as ChatGPT and Gemini.

Large-scale cheating cases already strain universities’ ability to monitor exams, but those incidents involved computers and phones. In Tokyo, a college student used his smart glasses to photograph his Waseda University entrance exam and posted the questions online to get instant answers. The case exposed a new problem for schools: technology worn on students’ faces.

Meta launched the second generation of its Ray-Ban smart glasses with enhanced camera and video capabilities and Meta AI integration. The company designed the glasses to offer built-in conversational features through voice commands, along with multimodal artificial intelligence that analyzes what the user sees and answers their questions.

The Wayfarer frames contain a small camera activated by a button. A subtle indicator light flashes to notify others that a photo or video is being taken. The user can then listen to the device’s short answers through the internal speaker. Although the glasses include a security function that prevents recording when the indicator is covered, most people fail to notice the light or understand what it means.

At first glance, the Ray-Ban smart glasses look like ordinary eyewear. However, their built-in multimodal AI lets users access information effortlessly, raising concerns about academic dishonesty.

As these AI-enabled devices blend in with regular eyewear, detecting them during exams becomes harder. Secondary principal Dr. Willette warns of the dangers of these wearable technologies. He believes academic honesty results from authentic testing strategies: “designing assessments that don’t require memorization but instead require students to demonstrate their skills through practice.”

Despite these concerns, the AI glasses currently lack displays. Meta plans to release a pair with a display embedded in the lenses to enable more intuitive interaction with the device. Still, the existing pairs include a small built-in computer running the latest artificial intelligence, which behaves like a chatbot and works without a smartphone.

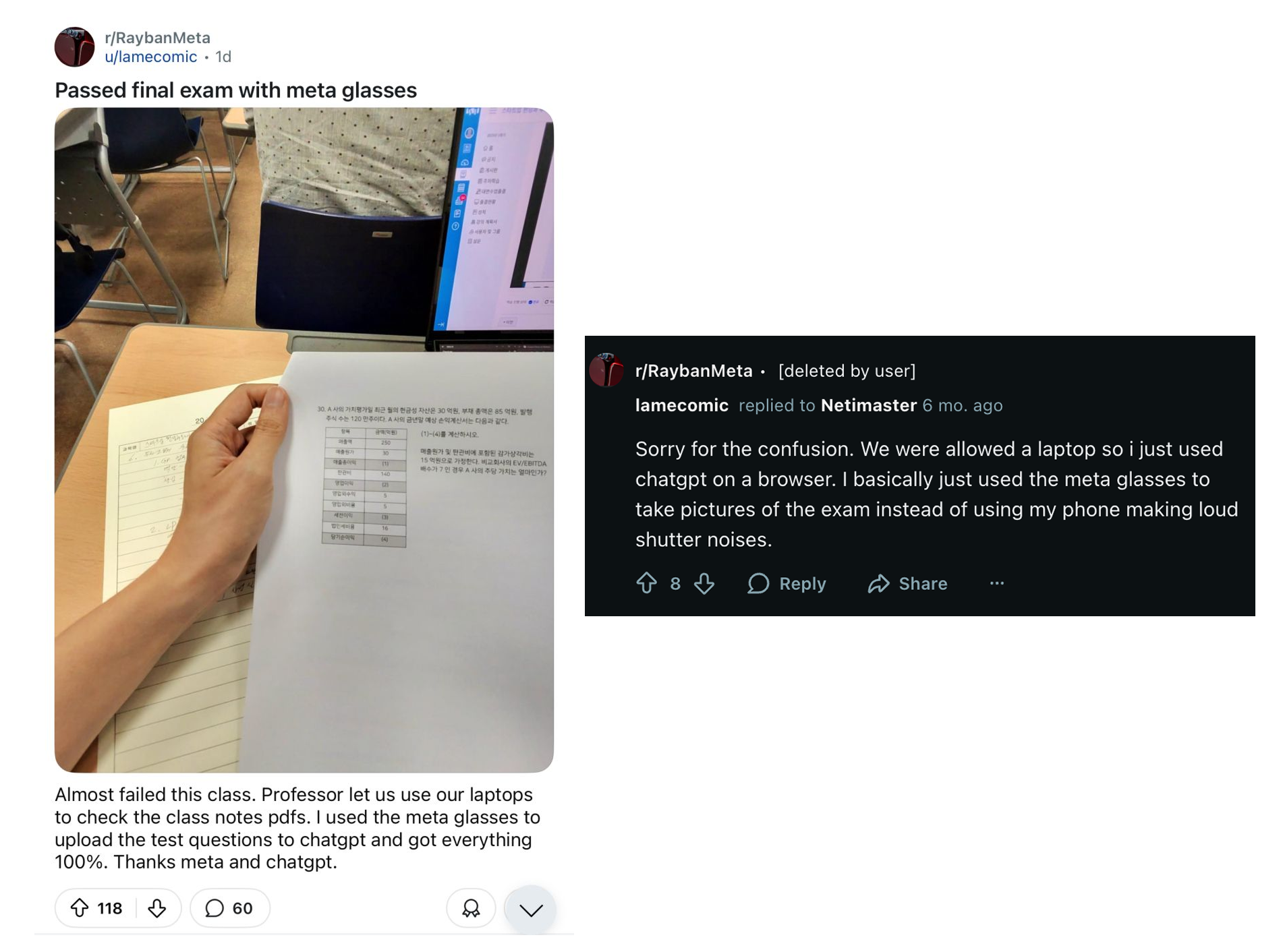

Students report using AI-enabled glasses to photograph test papers. The glasses help them feed questions to chatbots during exams.

A student attending Semyung University admitted, “During the exam, many of us quietly typed questions into AI tools on our computers. The teacher said using ChatGPT would be up to our own conscience.” He used his glasses to take a picture of the test and send it to his computer, “because the glasses look so ordinary.”

As students’ reliance on AI increases, Dr. Willette said, “We don’t want to ban AI or take away freedom, but we must regulate and monitor it to ensure it’s not causing harm.” He believes that “like [how] we regulate food for safety, we need regulations for technology. We’re not doing that as a society, as a school right now, and that needs to change.”

Originally published in Jets Flyover